
The key point to remember is that entropy is a figure that measures randomness. As you might expect, gases, where the particles could be anywhere, tend to have greater entropies than liquids, which in turn tend to have greater entropies than solids, where the particles are very regularly arranged. You can see this general trend from the animations showing the arrangement of particles in a solid, a liquid and a gas. Note, however, that not all solids have smaller entropy values than all liquids, nor do all liquids have smaller values than all gases. Further values can be obtained from The RSC Electronic Data Book.

Entropies are measured in joules per kelvin per mole (J K⁻¹ mol⁻¹). Notice the difference between the units of entropy and those of enthalpy (energy), which is measured in kilojoules per mole (kJ mol⁻¹).

(Don't let students worry about 'ln'; just get them to use the correct button on their calculators. Ln is the natural logarithm, which also has the effect of scaling a vast number to a small one: the natural log of 10²³ is about 52.96, for example. Some examples of calculating the ln of large numbers might help students to see the scaling effect.)
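As a minimal sketch of the scaling effect described above (the function name `ln_of_power_of_ten` is just an illustrative choice, not from the original post), Python's standard `math.log` can take the natural logarithm of an exact large integer such as 10²³:

```python
import math

def ln_of_power_of_ten(exponent: int) -> float:
    """Natural logarithm of 10**exponent, computed from the exact integer."""
    return math.log(10 ** exponent)

# Each jump of many orders of magnitude only adds a modest amount to ln
for exponent in (6, 23, 100):
    print(f"ln(10^{exponent}) = {ln_of_power_of_ten(exponent):.2f}")
```

Going from 10⁶ to 10¹⁰⁰ only moves the logarithm from roughly 14 to roughly 230, which is the kind of compression that makes S = k ln W workable.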

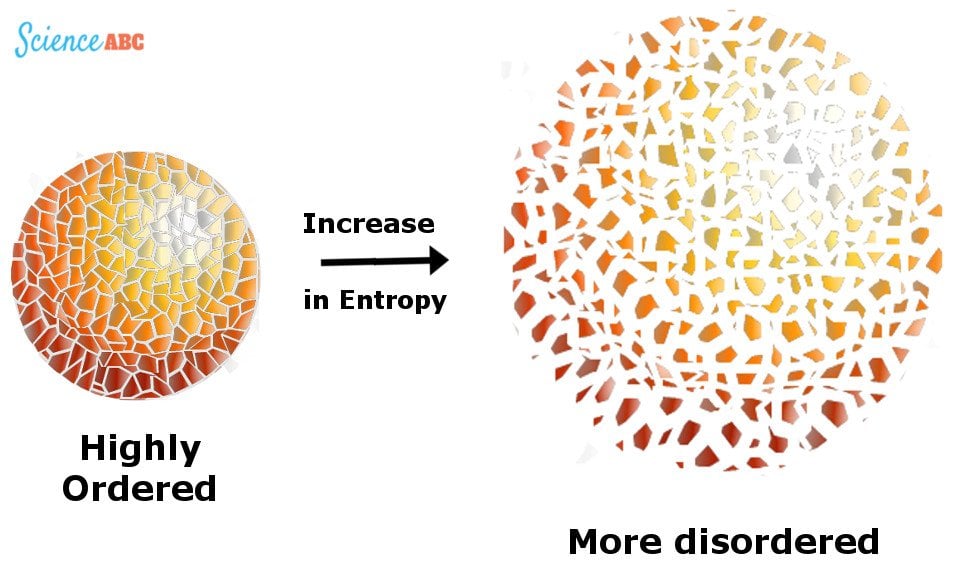

Scientists have a mathematical way of measuring randomness: it is called entropy, and it is related to the number of arrangements of particles (such as molecules) that lead to a particular state. (By a 'state', we mean an observable situation, such as a particular number of particles in each of two boxes.) As the number of particles increases, the number of possible arrangements in any particular state increases astronomically, so we need a scaling factor to produce numbers that are easy to work with. Boltzmann's expression provides it:

$$S = k \ln W$$

where W is the number of ways of arranging the particles that gives rise to a particular observed state of the system, and k is a constant called Boltzmann's constant, which has the value 1.38 × 10⁻²³ J K⁻¹. Boltzmann's constant links the energy of a single particle (such as an atom or molecule) to temperature: for a gas, the average translational kinetic energy per particle rises by (3/2)k for every 1 K increase in temperature. In the expression S = k ln W, the natural logarithm has the effect of scaling the vast number W to a smaller, more manageable number.

Just as logarithms to the base 10 are derived from 10^x, natural logarithms are derived from the exponential function e^x, where e has the value 2.718 (to three decimal places). There are certain mathematical advantages to using this base number.

There are several equations to calculate the entropy:

1. When a system receives an amount of energy q reversibly at a constant temperature T, the entropy increase ΔS is defined by the following equation:

   $$\Delta S = \frac{q}{T}$$

   In other words, the entropy change is the amount of energy transferred divided by the temperature at which the process takes place. Thus, entropy has units of energy per kelvin, J K⁻¹ (J K⁻¹ mol⁻¹ when quoted per mole).

2. If the reaction is known, we can use a table of standard entropy values to find the standard entropy change of the reaction, ΔS°rxn:

   $$\Delta S^\circ_{\text{rxn}} = \sum n\,S^\circ(\text{products}) - \sum m\,S^\circ(\text{reactants})$$

   where the first sum is over the standard entropies of the products and the second over those of the reactants, each multiplied by its stoichiometric coefficient (n or m).

3. It is also possible to use the Gibbs free energy change (ΔG) and the enthalpy change (ΔH) to find ΔS, by rearranging the Gibbs equation:

   $$\Delta G = \Delta H - T\Delta S \quad\Longrightarrow\quad \Delta S = \frac{\Delta H - \Delta G}{T}$$
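The equations above can be sketched as small Python functions. This is a minimal illustration, not the post's own method: the function names are hypothetical, the enthalpy of fusion of ice is taken as approximately 6010 J mol⁻¹, and the reaction and ΔG/ΔH figures in the usage lines are placeholder numbers chosen only to exercise the arithmetic, not tabulated data.

```python
import math

BOLTZMANN_K = 1.38e-23  # J K^-1, the value quoted in the text

def boltzmann_entropy(w: int) -> float:
    """S = k ln W for a state that can arise in W ways (J K^-1)."""
    return BOLTZMANN_K * math.log(w)

def entropy_change_from_heat(q_rev: float, temperature: float) -> float:
    """Equation 1: dS = q / T, with q in J transferred reversibly and T in K."""
    return q_rev / temperature

def reaction_entropy(products, reactants) -> float:
    """Equation 2: sum n*S(products) - sum m*S(reactants).

    Each argument is a list of (stoichiometric coefficient, S in J K^-1 mol^-1).
    """
    return sum(n * s for n, s in products) - sum(m * s for m, s in reactants)

def entropy_from_gibbs(delta_h: float, delta_g: float, temperature: float) -> float:
    """Equation 3: rearranging dG = dH - T dS gives dS = (dH - dG) / T."""
    return (delta_h - delta_g) / temperature

# Melting ice at 273.15 K, assuming dH_fus is roughly 6010 J mol^-1
print(f"{entropy_change_from_heat(6010, 273.15):.1f} J K^-1 mol^-1")

# Placeholder reaction 2A -> B with made-up entropies S(A) = 100, S(B) = 150
print(reaction_entropy(products=[(1, 150.0)], reactants=[(2, 100.0)]))

# Placeholder values: dH = -100 kJ, dG = -90 kJ at 298 K (converted to J)
print(f"{entropy_from_gibbs(-100_000, -90_000, 298):.1f} J K^-1")
```

Working in joules throughout (rather than mixing kJ for enthalpy with J for entropy) avoids the most common unit slip when combining these equations.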
The total entropy change includes both the entropy change of the system and the entropy change of the surroundings. Scientists have also concluded that this total entropy increases in a spontaneous process. The entropy of a solid (where the particles are tightly packed) is less than that of a gas (where the particles are free to move).
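A minimal sketch of combining the two contributions, assuming constant temperature and pressure so that the surroundings' entropy change can be taken as ΔS_surr = −ΔH_sys / T (a standard relation, but not one stated in this post). The freezing-water figures are approximate and are assumed not to vary with temperature, which is a simplification; the function names are illustrative.

```python
def surroundings_entropy_change(delta_h_sys: float, temperature: float) -> float:
    """dS_surr = -dH_sys / T, assuming constant T and P (J K^-1 mol^-1)."""
    return -delta_h_sys / temperature

def universe_entropy_change(delta_s_sys: float, delta_h_sys: float,
                            temperature: float) -> float:
    """Total entropy change = system contribution + surroundings contribution."""
    return delta_s_sys + surroundings_entropy_change(delta_h_sys, temperature)

# Freezing of water, approximate values treated as temperature-independent
DELTA_H_FREEZE = -6010.0  # J mol^-1 (energy released to the surroundings)
DELTA_S_FREEZE = -22.0    # J K^-1 mol^-1 (the system becomes more ordered)

for t in (263.15, 283.15):  # -10 C and +10 C
    total = universe_entropy_change(DELTA_S_FREEZE, DELTA_H_FREEZE, t)
    verdict = "spontaneous" if total > 0 else "not spontaneous"
    print(f"T = {t} K: total dS = {total:+.2f} J K^-1 mol^-1 ({verdict})")
```

Below 0 °C the surroundings gain more entropy than the freezing water loses, so the total is positive; above 0 °C the balance reverses.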

Entropy is the thermodynamic function used to measure a system's disorder. A reversible adiabatic expansion is isentropic, but an irreversible adiabatic expansion is not. The entropy change of a process is defined as the quantity of heat transferred reversibly divided by the absolute temperature. Consider a system consisting of two objects, each containing two particles, and two units of thermal energy (represented as ) in Figure 16.9.
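The two-object example can be counted directly. This minimal sketch assumes the energy units are indistinguishable while the four particles are distinguishable; the labels X and Y for the two objects, and the names used in the code, are chosen here for convenience and are not from the figure.

```python
from collections import Counter
from itertools import product

PARTICLES_PER_OBJECT = 2
ENERGY_UNITS = 2

def microstates(total_units: int, n_particles: int):
    """Yield every way to share indistinguishable energy units among particles."""
    for counts in product(range(total_units + 1), repeat=n_particles):
        if sum(counts) == total_units:
            yield counts

# Particles 0 and 1 belong to object X; particles 2 and 3 belong to object Y
states = list(microstates(ENERGY_UNITS, 2 * PARTICLES_PER_OBJECT))
by_distribution = Counter(sum(s[:PARTICLES_PER_OBJECT]) for s in states)

print(f"total microstates: {len(states)}")
for units_in_x in sorted(by_distribution, reverse=True):
    print(f"{units_in_x} unit(s) in X, {ENERGY_UNITS - units_in_x} in Y: "
          f"{by_distribution[units_in_x]} microstates")
```

The even split (one unit in each object) can arise in the most ways, 4 of the 10, so by S = k ln W it is the distribution with the highest entropy.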
